AI poses risks for international higher education

Bhaso Ndzendze

26 April 2026

I recently had the opportunity to make a keynote presentation at a workshop headlined by the Minister and Deputy Minister of Higher Education (23-24 April 2026), at the invitation of the department (DHET). The theme of the workshop was “Enhancing Strategic International Partnerships and Alliances Cooperation for the PSET system.” Attendees spanned much of the sector: Deputy Vice-Chancellors, senior directors of internationalisation and partnerships, government officials, and the bureaucrats who make our systems tick, from SAQA to USAf.

In my presentation, titled ‘Disrupting Education as we know it: The story of AI,’ I made four key points:

- AI is a comprehensive disruptor of education, playing out at two levels.

- AI has emerging implications for the internationalisation of higher education.

- The recently released draft National AI Policy needs clear input from the sector.

- South Africa has an opportunity to shape the international regulatory debate.

By now, the problem of AI in higher education is well known across the sector, to all stakeholders. But what has not yet come to the fore is AI as a challenge to the internationalisation of higher education. It is in this regard that I argued the first two points, before making the latter two. In a sense, the former set of arguments diagnoses the problem, and the latter takes stock of the regulatory response.

No less an entity than UNESCO has observed the double-edged nature of AI in higher education, and the challenge it poses for regulators. In its guidelines, it cautions that “Artificial Intelligence (AI) has the potential to address some of the biggest challenges in education today, innovate teaching and learning practices, and accelerate progress towards SDG 4. However, rapid technological developments inevitably bring multiple risks and challenges, which have so far outpaced policy debates and regulatory frameworks” (UNESCO, 2025).

My argument is that AI is a two-level disruptor in (international) higher education. Firstly, as a process disruptor, it has upended the very fundamentals of teaching, learning and assessing, and has muddied the research process and the reliability of the literature. On both scores, it calls into question standards, quality assurance and the very trust-building processes, across the sector and globally, that serve as a basis for higher education harmonisation across jurisdictions.

Secondly, AI as an outcomes disruptor affects the employability of students, by calling into question the credibility of students’ qualifications. Employers across the globe are bound to ask whether the students really did the work to obtain the qualification (per the process disruption described above). But that already assumes there is a market willing to absorb the graduates; just as easily, the market is coming to believe it can allocate certain functions to AI processes and agents. Universities have had to do soul-searching on the basis of these questions. But the questions also have implications for how universities collaborate. For example:

- How will partner universities put together joint programmes when two or more of the institutions have different philosophies towards AI?

- How confidently will institutions continue to send or accept international students for semesters abroad when they are not certain about the receiving or sending institution’s AI policies, and when they may be concerned about ‘gaps’ in their future graduates’ transcripts?

- How will qualification authorities such as SAQA, whose mandate is to validate the degrees of international students or returning South African students, carry out their due diligence on possibly AI-assisted qualifications?

There have been varying responses across different disciplines and institutions. One of the more drastic, but worthwhile, is presented by Smith in a forthcoming (2027) article in which he argues that “[A]uthors and law journals resist by writing and publishing fake scholarship. Doing so may corrupt the body of scholarship upon which language models train, leading to increasingly inaccurate outputs that render generative AI useless for legal scholarship and other forms of legal writing” (Smith, 2027). This is so-called AI poisoning in practice!

South Africa’s policy response to AI

The South African government, through the Department of Communications and Digital Technologies (DCDT), has put out a draft National AI Policy for public comment. Ironically, the policy has since come under scrutiny for having fabricated publications in its references, a typical giveaway for AI-generated work.

The policy’s aims are five-fold, with two of them having clear-cut implications for higher education and its internationalisation.

1. Increased uptake of AI technologies in public, private, society, and other sectors.

2. Enhanced institutional capacity for AI governance and regulation.

3. Growth in local AI innovation ecosystems and job creation.

4. Reduction in the digital divide through equitable access to AI education, technologies, and services.

5. Stronger national positioning in global AI discussions and partnerships.

In what it calls Strategic Building Block (SBB) 1 (Education, Training and Industry Collaboration), the policy aims to “ensure that South Africa has a robust AI talent pool” (p. 41), and states that “South Africa must urgently expand its human capital base to compete in the AI era. This requires a National AI Skills Development Strategy spanning schools, TVET colleges, universities, and lifelong learning pathways.” Additionally, the policy recommends that the government introduce competitive AI research grants, innovation challenges, and fellowships. In parallel, diaspora engagement programmes can facilitate knowledge transfer and mentorship, while targeted AI entrepreneurship training and incubation hubs will strengthen the pipeline of innovators and startups.

In Strategic Building Block (SBB) 2 (Global Collaboration and Competitiveness), the policy aims to position South Africa as a regional AI leader, by having the country build AI research facilities and partnerships with sub-Saharan and other continental partners. “This involves the creation of several AI hubs in South Africa, such as those at the University of Johannesburg (UJ), Tshwane University of Technology (TUT), Central University of Technology (CUT), and a military-focused hub at Stellenbosch University. These hubs will enable innovation in industries such as agriculture, healthcare, finance, and defence,” it says (p. 68).

The document then lists the entities that it says will play key roles in the regulation of AI in South Africa, along with their contributions to the AI ecosystem:

- Department of Communications and Digital Technologies (DCDT)

- Department of Science, Technology and Innovation (DSTI)

- Council for Scientific and Industrial Research (CSIR)

- Technology Innovation Agency (TIA)

- Industrial Development Corporation (IDC)

- South African Human Rights Commission (SAHRC)

- Information Regulator (South Africa)

Evidently, despite the number of DHET-facing issues of which it is cognisant (and the questions I have raised above), the draft policy does not mention the department. This is despite education being the area most immediately affected by the emergence of AI, particularly generative AI.

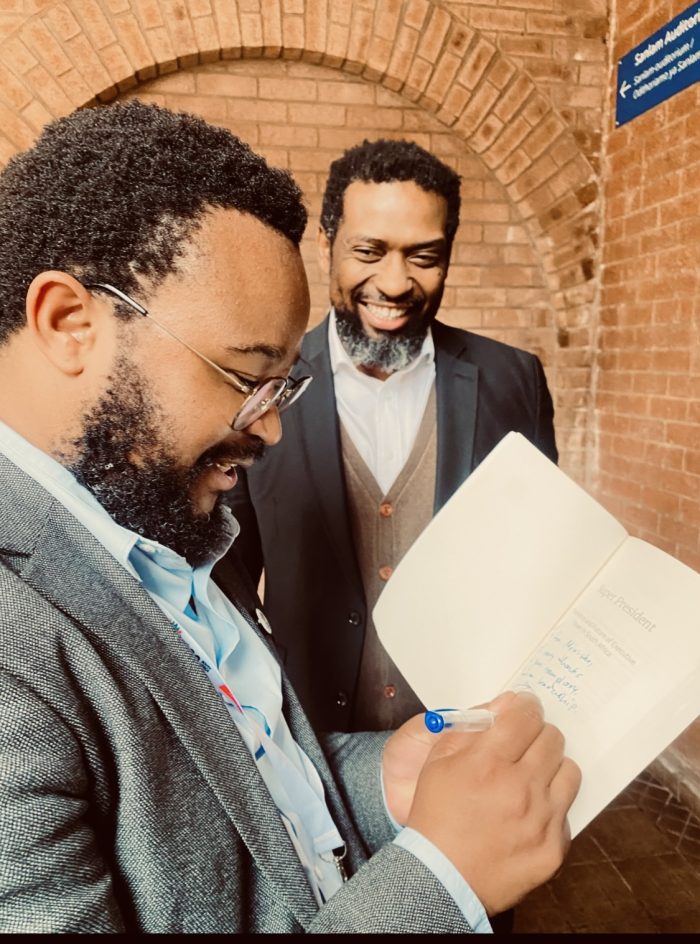

In my book, Super President: The History and Future of Executive Power, I make an argument about mandate clarity within cabinet, an institution to which the country (and its international partners) looks for direction and execution of policies. It would be a major oversight, and an exercise in duplication, if the DHET were either to have no role in the implementation of the policy or to pursue its own siloed approach. I raised this in my presentation too.

The minister and the deputy minister graciously took both points well.